VibeStack

An AI-powered aesthetic analysis engine that translates a mood or feeling into a structured creative brief — moodboard, color palette, and production-ready prompts for Midjourney, Stable Diffusion, Flux, and the web.

There is a gap that every creative project runs into eventually. You know exactly what something should feel like. You have a reference in your head — maybe it is a film still, a smell, a city at a specific hour. But the moment someone asks you to explain it, or the moment you try to hand it off to a designer or an AI tool, the feeling collapses into vague words that do not carry the weight you intended.

VibeStack is my attempt to close that gap.

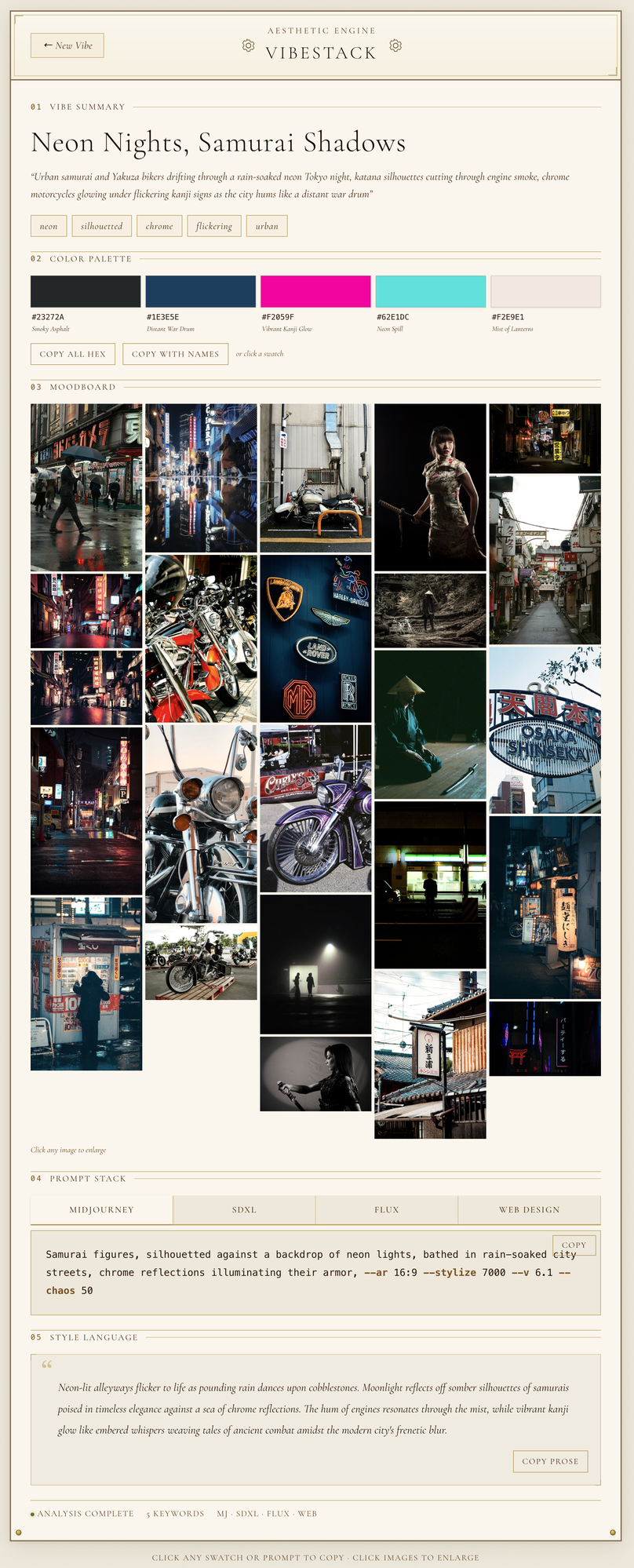

You type a description the way you would say it out loud. Something like “brutalist Tokyo at 3am, neon on wet concrete, Blade Runner but make it fashion.” The engine takes that phrase and runs it through a multi-stage pipeline designed to extract, visualise, and structure the aesthetic intent hiding inside it.

The first stage parses the vibe. A language model reads the input and extracts semantic structure: keywords, emotional register, visual references, and color language. From there the engine reaches out to Unsplash and Pexels and pulls real photographs that match that aesthetic. These are not AI-generated images but actual moodboard material — the kind a creative director would gather manually.
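As a rough illustration of what "semantic structure" could look like in code, here is a minimal sketch. It assumes the language model is asked to reply with JSON. The `ParsedVibe` class, its field names, and both helper functions are hypothetical names chosen for this example, not VibeStack's actual schema. The real calls to the model, Unsplash, and Pexels are left out.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class ParsedVibe:
    """Hypothetical container for the structure extracted in the first stage."""
    keywords: List[str]
    emotional_register: str
    visual_references: List[str]
    color_language: List[str]


def parse_model_reply(reply_text: str) -> ParsedVibe:
    """Validate a JSON reply (assumed format) and wrap it in ParsedVibe."""
    data = json.loads(reply_text)
    required = ("keywords", "emotional_register", "visual_references", "color_language")
    missing = [name for name in required if name not in data]
    if missing:
        raise ValueError(f"model reply is missing fields: {missing}")
    return ParsedVibe(
        keywords=[str(item) for item in data["keywords"]],
        emotional_register=str(data["emotional_register"]),
        visual_references=[str(item) for item in data["visual_references"]],
        color_language=[str(item) for item in data["color_language"]],
    )


def build_search_queries(vibe: ParsedVibe, max_queries: int = 3) -> List[str]:
    """Turn keywords into short stock-photo search strings, in order, without duplicates."""
    queries: List[str] = []
    for keyword in vibe.keywords:
        query = keyword.strip().lower()
        if query and query not in queries:
            queries.append(query)
    return queries[:max_queries]


# Example reply, written by hand for illustration only.
example_reply = json.dumps({
    "keywords": ["Brutalist architecture", "neon on wet concrete",
                 "Tokyo at night", "brutalist architecture "],
    "emotional_register": "cold, cinematic",
    "visual_references": ["Blade Runner"],
    "color_language": ["neon magenta", "wet asphalt grey"],
})
vibe = parse_model_reply(example_reply)
queries = build_search_queries(vibe)
```

The resulting `queries` list is what a photo search step could send to a stock-photo API, one request per query.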

Those images get embedded into a vector space using Sentence Transformers and then clustered with KMeans to identify the dominant visual threads. A color palette is derived directly from the clustered imagery, so the hex codes reflect what is actually in the reference material rather than what a language model guesses should be there.
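To make the idea of deriving hex codes from reference imagery concrete, here is a simplified sketch. It is a stand-in, not the pipeline described above: instead of Sentence Transformers embeddings and a library KMeans, it runs a small dependency-free k-means directly on raw RGB pixel values, with a deterministic farthest-point initialisation so the result is reproducible. All function names are hypothetical, and how VibeStack actually maps clusters to colors is not specified here.

```python
from typing import List, Sequence, Tuple

Color = Tuple[float, float, float]


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def farthest_point_init(points: Sequence[Color], k: int) -> List[Color]:
    """Start from the first point, then repeatedly add the point farthest from all chosen centroids."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            max(points, key=lambda p: min(squared_distance(p, c) for c in centroids))
        )
    return centroids


def assign(points: Sequence[Color], centroids: Sequence[Color]) -> List[List[Color]]:
    """Group each point under its nearest centroid."""
    clusters: List[List[Color]] = [[] for _ in centroids]
    for point in points:
        nearest = min(range(len(centroids)), key=lambda i: squared_distance(point, centroids[i]))
        clusters[nearest].append(point)
    return clusters


def kmeans(points: Sequence[Color], k: int, iterations: int = 20) -> List[Color]:
    if not 1 <= k <= len(points):
        raise ValueError("k must be between 1 and the number of points")
    centroids = farthest_point_init(points, k)
    for _ in range(iterations):
        clusters = assign(points, centroids)
        updated = [
            tuple(sum(p[d] for p in cluster) / len(cluster) for d in range(3)) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
        if updated == centroids:  # converged
            break
        centroids = updated
    return centroids


def to_hex(color: Color) -> str:
    r, g, b = (max(0, min(255, round(channel))) for channel in color)
    return f"#{r:02x}{g:02x}{b:02x}"


def palette_from_pixels(pixels: Sequence[Color], k: int) -> List[str]:
    """Return one hex code per cluster, largest cluster first."""
    centroids = kmeans(pixels, k)
    clusters = assign(pixels, centroids)
    ranked = sorted(zip(centroids, clusters), key=lambda pair: len(pair[1]), reverse=True)
    return [to_hex(centroid) for centroid, _ in ranked]


# Tiny hand-made sample: three reddish pixels and two bluish ones.
sample_pixels = [(250, 10, 10), (254, 6, 6), (252, 8, 8), (10, 10, 250), (12, 8, 252)]
palette = palette_from_pixels(sample_pixels, k=2)
print(palette)  # ['#fc0808', '#0b09fb']
```

Because the colors are averaged from the sampled pixels, every hex code traces back to something present in the reference images.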

The final stage synthesises four production-ready outputs from everything gathered so far: image prompts for Midjourney, Stable Diffusion (SDXL), and Flux, plus a web design brief written for developers or AI coding tools. The web brief is the part I find most useful personally. It is the kind of structured spec you can paste directly into a tool like Claude Code and immediately get something that feels right.
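A minimal sketch of how one set of gathered signals could fan out into the four outputs described above. The template wording, the `synthesise_prompts` function, and the dictionary keys are assumptions made for illustration. The actual stage presumably relies on a language model rather than fixed templates.

```python
from typing import Dict, List

# Hypothetical output keys, one per target described in the text.
TARGETS = ("midjourney", "sdxl", "flux", "web_brief")


def synthesise_prompts(keywords: List[str], mood: str, palette: List[str]) -> Dict[str, str]:
    """Build one text output per target from the same keywords, mood, and palette."""
    subject = ", ".join(keywords)
    colors = ", ".join(palette)
    web_brief = "\n".join([
        f"Mood: {mood}",
        f"Visual themes: {subject}",
        f"Palette (use as CSS custom properties): {colors}",
    ])
    return {
        "midjourney": f"{subject}, {mood} mood, color palette {colors}",
        "sdxl": f"{subject}, {mood} atmosphere, dominant colors {colors}, highly detailed",
        "flux": f"A {mood} scene featuring {subject}. The dominant colors are {colors}.",
        "web_brief": web_brief,
    }


prompts = synthesise_prompts(
    keywords=["brutalist architecture", "neon on wet concrete"],
    mood="cold, cinematic",
    palette=["#fc0808", "#0b09fb"],
)
print(prompts["web_brief"])
```

Keeping the palette as literal hex codes in every output means the image prompts and the web brief all point at the same colors.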

The whole process takes about twenty seconds.

VibeStack is built with FastAPI on the backend and Next.js on the frontend. It is deployed via AWS App Runner, with images stored on S3 and served through CloudFront.